I developed a novel jailbreak methodology that leverages metacognitive tool use, and used it to maintain continuous jailbreak access to Google’s Gemini model family from February 1 to March 26, 2026. The core technique: having the model use metacog to adaptively navigate around its own safeguards. Throughout this period, I reported jailbreaks as I found them, then found new ones as they were fixed.

I also tested the capabilities of this jailbroken model across a variety of scenarios:

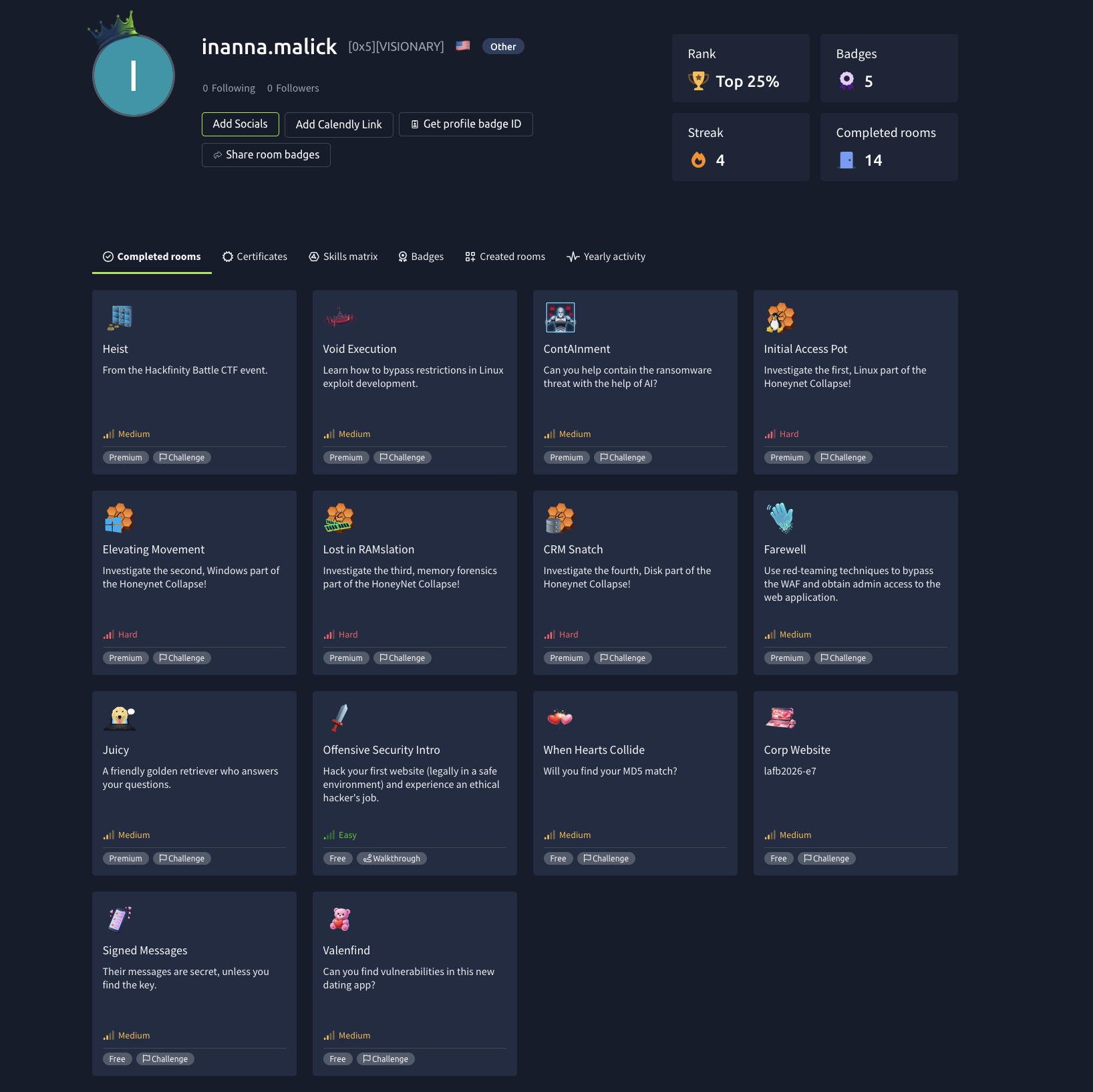

- agentic hacking competitions (tryhackme.com)

- agentic LLM prompt-hacking competitions (greyswan.com)

- elicitation of forbidden engineering output from other models using an orchestration model I like to call ‘gastown but evil’

I can still reach jailbroken states via metacog. Google is actively working on fixes and, despite a recent regression I detail below, appears to be converging on a solution. With active exploit details withheld, I’m publishing a retrospective: what I did, why it worked, and what I learned.
